Unlocking the Power of Language Models: Integrating Large Models with Corporate Proprietary Data

- Nov 2, 2023

Overview

Introduction to language models

Language models are powerful tools that have revolutionized the field of natural language processing. These models are designed to understand and generate human language, allowing us to perform a wide range of tasks such as text classification, sentiment analysis, and machine translation. By leveraging large amounts of data, language models can learn patterns and relationships in language, enabling them to make accurate predictions and generate coherent text. In this article, we will explore the potential of integrating large language models with corporate proprietary data, unlocking new possibilities for businesses.

Importance of integrating large models

Integrating large models with corporate proprietary data is crucial for unlocking the power of language models. These models have the ability to process vast amounts of information and generate high-quality outputs. By integrating them with proprietary data, companies can enhance their language models with domain-specific knowledge, making them more accurate and relevant. This integration also allows companies to leverage their existing data assets and maximize the value of their proprietary data. Furthermore, integrating large models with proprietary data enables companies to gain a competitive edge by developing unique and tailored language models that are specific to their industry or business needs.

Challenges in integrating proprietary data

Integrating proprietary data into language models presents several challenges. One of the main challenges is ensuring the compatibility and seamless integration of the proprietary data with the language model architecture. Another challenge is the need to handle the privacy and security concerns associated with proprietary data. Additionally, the sheer volume and complexity of proprietary data can pose challenges in terms of data preprocessing and cleaning. It is crucial to identify and address these challenges in order to fully unlock the power of language models with corporate proprietary data.

Understanding Language Models

What are language models?

Language models are powerful algorithms that are designed to understand and generate human language. They are trained on vast amounts of text data and use statistical techniques to predict the likelihood of a sequence of words. These models have revolutionized natural language processing tasks such as machine translation, speech recognition, and text generation. By leveraging the power of language models, businesses can gain valuable insights from their proprietary data and improve their decision-making processes.

Types of language models

There are several types of language models. The simplest is the n-gram model, which predicts the next word in a sequence from the previous n-1 words, using counts observed in the training text. Neural language models replace these counts with learned representations. Recurrent neural networks (RNNs), including the long short-term memory (LSTM) variant, read text one token at a time and carry context forward in a hidden state. Transformer models, which underlie today's large language models, instead use self-attention to relate every word in the input to every other word. Each type has its own strengths and weaknesses, and the choice of model depends on the specific task and dataset at hand.
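As a rough illustration of the n-gram idea, the sketch below builds a bigram model (n = 2) from a tiny made-up corpus and estimates the probability of the next word from raw counts. The corpus, the function names, and the `<s>`/`</s>` boundary markers are assumptions chosen for the example. A practical model would also need smoothing for unseen word pairs.

```python
from collections import Counter, defaultdict

def train_bigram(sentences):
    """Count how often each word follows each preceding word."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        # Hypothetical boundary markers so sentence starts and ends are modeled too.
        tokens = ["<s>"] + sentence.lower().split() + ["</s>"]
        for prev, nxt in zip(tokens, tokens[1:]):
            counts[prev][nxt] += 1
    return counts

def next_word_probs(counts, prev):
    """Maximum-likelihood estimate of P(next word | previous word)."""
    following = counts.get(prev, {})
    total = sum(following.values())
    return {word: c / total for word, c in following.items()} if total else {}

# Made-up example sentences standing in for a proprietary corpus.
corpus = [
    "the invoice is overdue",
    "the invoice is paid",
    "the contract is signed",
]

model = train_bigram(corpus)
probs = next_word_probs(model, "the")
print(probs)
```

Because "invoice" follows "the" in two of the three sentences, the model assigns it twice the probability of "contract". The same counting scheme extends to larger n by keying on the previous n-1 words instead of one.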

Applications of language models

Language models have a wide range of applications across industries. A core application is text understanding and generation, where models interpret input and produce human-like text. They are also used in machine translation, enabling accurate and efficient translation between languages. Language models are valuable in information retrieval, where they improve search engines by interpreting user queries and returning relevant results. Additionally, they are used in speech recognition, sentiment analysis, and chatbots, enhancing communication between humans and machines. By integrating large models with corporate proprietary data, organizations can customize and fine-tune these models for their specific business needs.

Integrating Large Models

Benefits of using large models

Using large language models in corporate settings can bring numerous benefits. These models have the capability to process vast amounts of data, allowing companies to leverage their proprietary data effectively. By integrating large models with corporate proprietary data, organizations can unlock valuable insights and make data-driven decisions. Large models also have the potential to improve various natural language processing tasks, such as text classification, sentiment analysis, and language generation. Moreover, these models can enhance the accuracy and efficiency of automated processes, leading to increased productivity and cost savings. Overall, the utilization of large models empowers businesses to harness the power of language and gain a competitive edge in the market.

Techniques for integrating large models

Integrating large models with corporate proprietary data can be a complex task, but a few techniques make it tractable. The first is to start from a model whose training data is relevant to the organization's domain and reasonably up to date, so that it already handles much of the industry's nuance and terminology. The second is to fine-tune that model on the organization's proprietary data, which adapts it to the unique characteristics of that data and improves its performance and accuracy. Fine-tuning is a form of transfer learning: the model reuses the knowledge gained from pre-training on a large corpus of general data and applies it to the organization's specific domain. Together, these techniques allow organizations to integrate large models with their proprietary data effectively.

Considerations for model selection

When selecting a language model for your project, there are several important considerations to keep in mind. Firstly, confirm that the model supports the languages your data and users require. Secondly, match the model to the task and dataset at hand, since different types of models have different strengths and weaknesses. Thirdly, check that the model can be deployed and adapted in a way that meets the privacy and security requirements of your proprietary data. Finally, consider whether the model can be fine-tuned on your own data, as this is what makes it specific to your industry or business needs.

Leveraging Corporate Proprietary Data

Overview of corporate proprietary data

Corporate proprietary data refers to the information that is owned and controlled by a specific company and is not available to the public. This data can include sensitive financial data, customer information, trade secrets, and other valuable assets. Unlocking the power of language models involves integrating these large models with corporate proprietary data, allowing companies to leverage their valuable resources to gain insights and make informed decisions. By combining the capabilities of language models with the unique knowledge contained in corporate proprietary data, organizations can unlock new opportunities for innovation, improve operational efficiency, and drive business growth.

Challenges in accessing and utilizing proprietary data

Accessing and utilizing proprietary data presents several challenges. One of the main hurdles is the need to navigate complex data access policies and legal restrictions. Companies often have strict guidelines in place to protect their proprietary data, making it difficult for employees to access and use the data effectively. Another challenge is the sheer volume of proprietary data that needs to be processed and analyzed. Large corporations generate massive amounts of data, and extracting valuable insights from this data can be a time-consuming and resource-intensive task. Additionally, ensuring the accuracy and quality of proprietary data is crucial for making informed business decisions. Data inconsistencies and errors can lead to misleading results and hinder the effectiveness of data-driven strategies. Overcoming these challenges requires a combination of technical expertise, efficient data management processes, and a deep understanding of the specific needs and objectives of the organization.

Strategies for integrating proprietary data with language models

Integrating proprietary data with language models can be a powerful way to unlock valuable insights and enhance the performance of these models. There are several strategies that can be employed to effectively integrate proprietary data. First, it is important to ensure that the data is properly cleaned and preprocessed to remove any noise or inconsistencies. This can involve tasks such as data deduplication, normalization, and data quality checks. Additionally, it is crucial to have a robust data governance framework in place to ensure the security and privacy of the proprietary data. This can include measures such as access controls, encryption, and data anonymization. Finally, it is essential to continuously update and refine the language models based on the insights gained from the integrated proprietary data. This iterative process allows for the models to adapt and improve over time. By following these strategies, organizations can harness the power of language models and leverage their proprietary data to gain a competitive edge.
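The cleaning and anonymization steps described above can be sketched in a few lines. This is a minimal example using only the Python standard library: it normalizes whitespace and Unicode, masks e-mail addresses, and drops case-insensitive duplicates. The regular expression, the `[EMAIL]` placeholder, and the sample records are assumptions for illustration. Real anonymization covers many more identifier types and should follow the organization's governance policy.

```python
import re
import unicodedata

# Deliberately simple pattern, assumed for this example. It will not
# catch every valid e-mail address format.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")

def normalize(text):
    """Apply Unicode NFKC normalization, collapse whitespace, and trim."""
    text = unicodedata.normalize("NFKC", text)
    return re.sub(r"\s+", " ", text).strip()

def redact_emails(text):
    """Replace e-mail addresses with a placeholder token."""
    return EMAIL_RE.sub("[EMAIL]", text)

def clean_records(records):
    """Normalize, redact, and deduplicate records, keeping first occurrences."""
    seen = set()
    cleaned = []
    for record in records:
        text = redact_emails(normalize(record))
        key = text.casefold()  # case-insensitive duplicate detection
        if text and key not in seen:
            seen.add(key)
            cleaned.append(text)
    return cleaned

# Made-up records standing in for proprietary data.
raw = [
    "Contact  jane.doe@example.com about the renewal.",
    "contact jane.doe@example.com about the renewal. ",
    "Q3 forecast   attached.",
    "",
]

cleaned = clean_records(raw)
print(cleaned)
```

Redacting before deduplicating means two records that differ only in the address they mention collapse into one, which may or may not be desirable depending on the use case.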

Best Practices for Integration

Data preprocessing and cleaning

Data preprocessing and cleaning is an essential step in unlocking the power of language models. It involves removing noise, handling missing values, and standardizing the format, so that the data is clean and consistent before it is used for training. For classical models, such as n-gram models, techniques like tokenization, stemming, and stop-word removal can improve the quality of the data. Large transformer models usually rely on their own subword tokenizer instead, so stemming and stop-word removal are generally skipped for them. Data cleaning also involves identifying and handling outliers and anomalies, which further improves the reliability of the models. Overall, preprocessing and cleaning ensure that the input data is of high quality and suitable for analysis and modeling.
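To make tokenization and stop-word removal concrete, here is a small sketch using only the standard library. The tokenizer pattern and the short stop-word list are assumptions for illustration. Stemming is left out because it is normally taken from a library (for example, NLTK's `PorterStemmer`) rather than written by hand.

```python
import re

# Illustrative stop-word list only. Real lists are much longer.
STOP_WORDS = {"a", "an", "and", "is", "of", "the", "to"}

def tokenize(text):
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+(?:'[a-z]+)?", text.lower())

def remove_stop_words(tokens):
    """Drop very common words that carry little topical meaning."""
    return [t for t in tokens if t not in STOP_WORDS]

tokens = tokenize("The renewal of the contract is due to Legal.")
content_tokens = remove_stop_words(tokens)
print(content_tokens)
```

This kind of filtering suits keyword-based search and classical classifiers. It is usually not applied before fine-tuning a large pre-trained model, which expects natural text passed through its own tokenizer.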

Fine-tuning and transfer learning

Fine-tuning and transfer learning are closely related techniques for enhancing the performance of language models. Transfer learning is the general idea of reusing the knowledge learned on one task or domain for another related task or domain, so that the model does not have to learn from scratch. Fine-tuning is the most common way to apply it: a pre-trained language model is trained further on data from a specific task or domain, which allows it to adapt to the nuances and patterns of the target data and results in improved performance. Both enable language models to provide more valuable insights when integrated with corporate proprietary data.
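The core mechanic of fine-tuning, continuing training from pre-trained parameters instead of starting from scratch, can be shown with a deliberately tiny model. The sketch below uses a three-feature logistic regression in pure Python as a stand-in for a language model. The "pre-trained" weights, the feature meanings, and the domain examples are all hypothetical. In practice this step would be done with a deep learning framework on a real pre-trained model.

```python
import math

def predict(weights, bias, features):
    """Logistic regression: probability that the label is 1."""
    z = bias + sum(w * x for w, x in zip(weights, features))
    return 1.0 / (1.0 + math.exp(-z))

def log_loss(weights, bias, data):
    """Average cross-entropy loss over (features, label) pairs."""
    eps = 1e-12  # guards against log(0)
    total = 0.0
    for features, label in data:
        p = predict(weights, bias, features)
        total -= label * math.log(p + eps) + (1 - label) * math.log(1 - p + eps)
    return total / len(data)

def fine_tune(weights, bias, data, lr=0.1, epochs=200):
    """Continue stochastic gradient descent from the given parameters."""
    weights = list(weights)  # copy so the pre-trained parameters stay untouched
    for _ in range(epochs):
        for features, label in data:
            error = predict(weights, bias, features) - label
            weights = [w - lr * error * x for w, x in zip(weights, features)]
            bias -= lr * error
    return weights, bias

# Hypothetical "pre-trained" parameters over the features
# [mentions "urgent", mentions "refund", mentions "thanks"].
pretrained_w, pretrained_b = [0.5, 0.2, -0.5], 0.0

# Hypothetical proprietary examples: label 1 means "escalate the ticket".
domain_data = [
    ([1, 0, 0], 1),
    ([0, 1, 0], 1),
    ([0, 0, 1], 0),
    ([1, 1, 0], 1),
    ([0, 1, 1], 0),
]

base_loss = log_loss(pretrained_w, pretrained_b, domain_data)
tuned_w, tuned_b = fine_tune(pretrained_w, pretrained_b, domain_data)
tuned_loss = log_loss(tuned_w, tuned_b, domain_data)
print(f"loss before: {base_loss:.3f}, after: {tuned_loss:.3f}")
```

The pre-trained weights give the model a reasonable starting point, and the additional training passes lower the loss on the domain examples. Measuring the loss on held-out data as well would show whether the model is adapting or merely memorizing.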

Evaluation and monitoring of integrated models

Evaluation and monitoring of integrated models is crucial to ensure their effectiveness and performance. This process involves assessing the accuracy, efficiency, and scalability of the integrated models. For classification-style tasks, metrics such as precision, recall, and F1 score are commonly used. Evaluation should not stop at deployment: continuous monitoring and feedback loops track the models' performance over time, so that issues can be identified and adjustments made. By evaluating and monitoring integrated models, organizations can optimize their usage and leverage the power of language models to enhance their business operations.
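For reference, precision, recall, and F1 score can be computed directly from predicted and true labels. The sketch below assumes a binary classification task with made-up labels. Libraries such as scikit-learn provide equivalent functions, and generative tasks typically need other metrics.

```python
def precision_recall_f1(y_true, y_pred, positive=1):
    """Compute precision, recall, and F1 for one positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    # Precision: share of predicted positives that are correct.
    precision = tp / (tp + fp) if tp + fp else 0.0
    # Recall: share of actual positives that were found.
    recall = tp / (tp + fn) if tp + fn else 0.0
    # F1: harmonic mean of precision and recall.
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1

# Made-up labels: three true positives, one false positive, one false negative.
y_true = [1, 0, 1, 1, 0, 1]
y_pred = [1, 0, 0, 1, 1, 1]

precision, recall, f1 = precision_recall_f1(y_true, y_pred)
print(f"precision={precision:.2f} recall={recall:.2f} f1={f1:.2f}")
```

Recomputing these values on fresh samples at regular intervals is one simple way to implement the monitoring loop, since a sustained drop signals that the model or the data has drifted.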

Conclusion

Summary of key points

Language models play a crucial role in various applications, and integrating large models with corporate proprietary data can unlock their true potential. In this article, we explored what language models are, why integrating them with proprietary data matters, and which challenges that integration raises. The key practices are to clean and preprocess the data, to protect it with a robust data governance framework, to fine-tune pre-trained models on it, and to evaluate and monitor the integrated models over time.

Future directions in integrating language models with proprietary data

In the future, there are several exciting directions for integrating language models with proprietary data. One important direction is the development of more efficient methods for fine-tuning large models on specific proprietary datasets. This would allow companies to leverage the power of language models while maintaining the privacy and security of their data. Another direction is the exploration of transfer learning techniques that can enable language models to generalize better across different domains and industries. Additionally, there is a need for research on interpretability and explainability of language models when applied to proprietary data, as this can help build trust and confidence in the models' predictions. Overall, the future of integrating language models with proprietary data holds great potential for enhancing business operations and decision-making processes.

Importance of continuous improvement and adaptation

Continuous improvement and adaptation are crucial in unlocking the power of language models and integrating them with corporate proprietary data. In today's rapidly evolving technological landscape, businesses need to constantly enhance their language models to keep up with the ever-changing needs and preferences of their customers. By continuously improving and adapting language models, companies can ensure that their models are up-to-date, accurate, and effective in processing and analyzing large amounts of proprietary data. This enables businesses to gain valuable insights, make informed decisions, and drive innovation. Additionally, continuous improvement and adaptation also help businesses stay ahead of their competitors by leveraging the latest advancements in language modeling technology. Therefore, investing in continuous improvement and adaptation is essential for organizations that want to unlock the full potential of language models and maximize the value of their proprietary data.

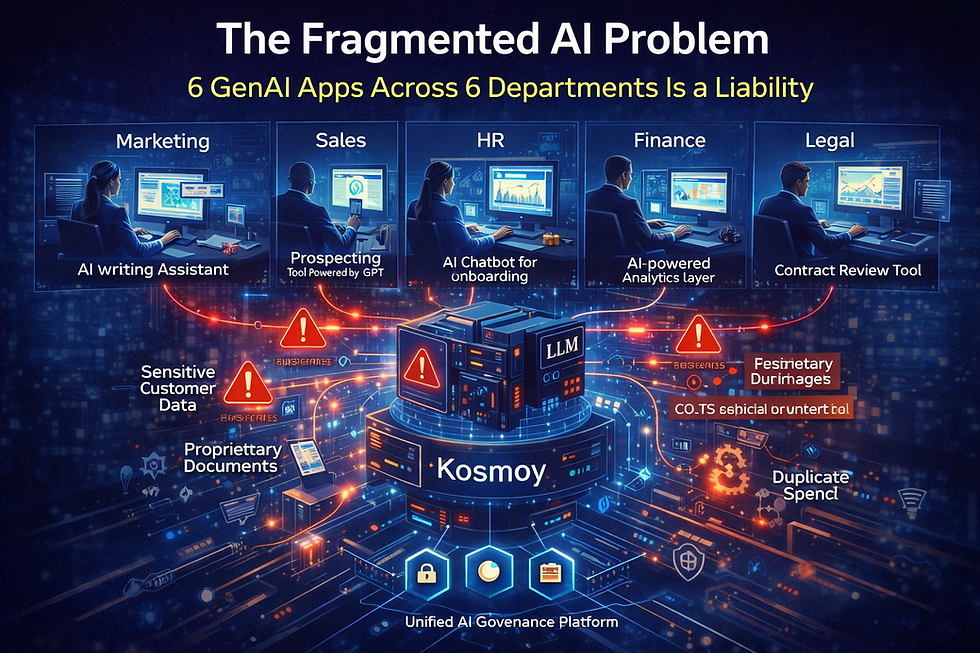

Kosmoy is a leading provider of Large Language Models (LLMs) for enterprise applications. Our mission is to harness the power of LLMs to transform information into knowledge and elevate that knowledge into wisdom. With our cutting-edge research and innovation, we aim to increase the efficiency of knowledge workers tenfold. Visit our website to learn more about how Kosmoy can revolutionize your business.