AI INVENTORY · MODEL REGISTRY

Every approved model, in one catalogue.

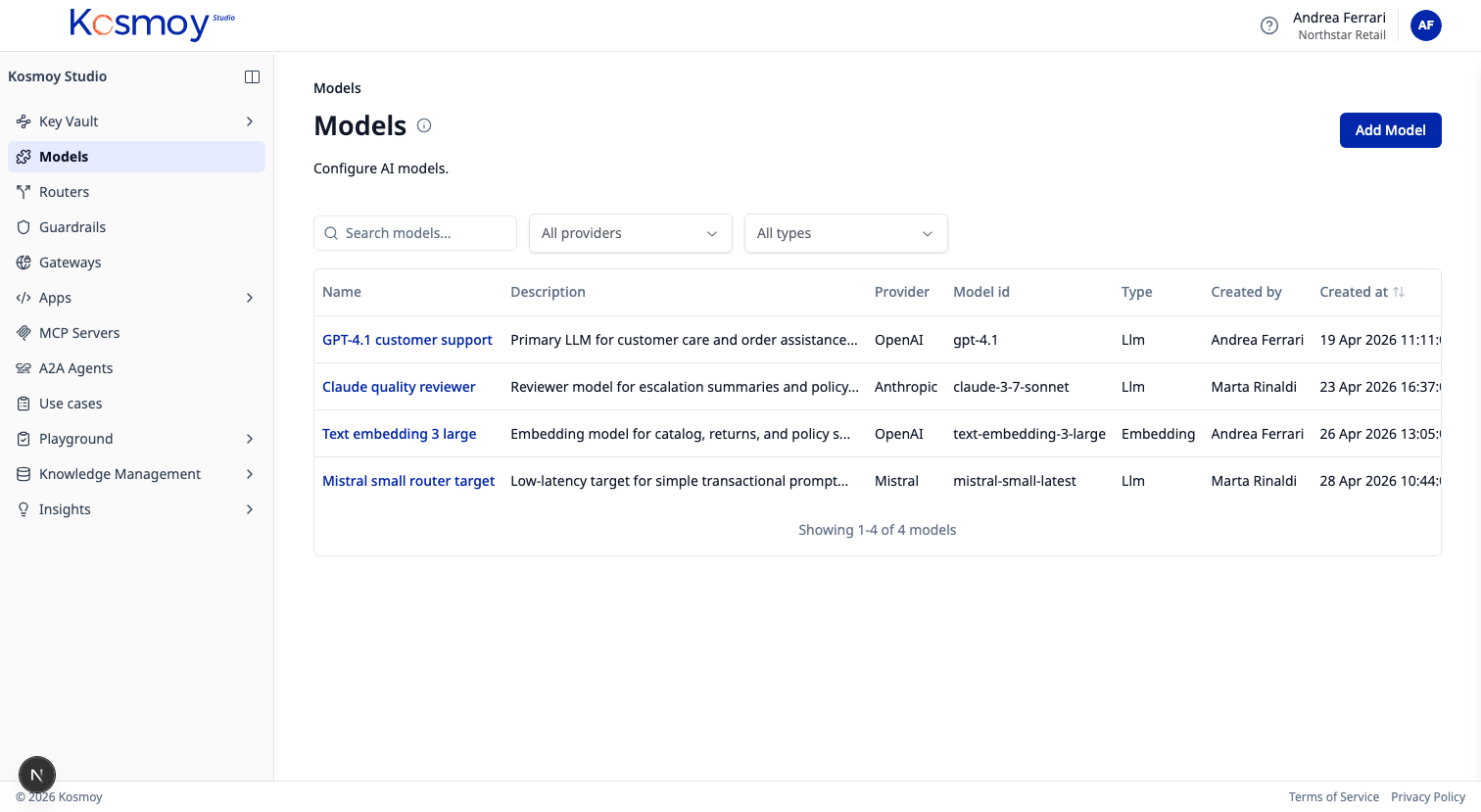

Public LLMs, private LLMs, embedding models and fine-tuned SLMs, each listed with its provider, version, deployment mode, approval state and allowed use cases.

The Model Registry is the source of truth for which models the central AI team has approved. Every entry connects to a provider, an approval state, a list of allowed use cases and an RBAC scope.
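As a rough illustration of what one catalogue entry might hold, here is a minimal Python sketch. The class name, field names and sample values are assumptions made for this example, not the product's actual schema.

```python
from dataclasses import dataclass
from enum import Enum


class ApprovalState(Enum):
    DRAFT = "draft"
    APPROVED = "approved"
    DEPRECATED = "deprecated"
    RETIRED = "retired"


@dataclass(frozen=True)
class ModelEntry:
    """One registry entry. Field names are illustrative assumptions."""
    name: str
    provider: str
    version: str
    deployment_mode: str               # "cloud-hosted", "private" or "fine-tuned"
    approval_state: ApprovalState
    allowed_use_cases: frozenset[str]
    rbac_scope: frozenset[str]         # teams permitted to call the model


# Hypothetical entry; the model name, version and team are made up.
entry = ModelEntry(
    name="support-summarizer",
    provider="Anthropic",
    version="v1",
    deployment_mode="cloud-hosted",
    approval_state=ApprovalState.APPROVED,
    allowed_use_cases=frozenset({"ticket-summarization"}),
    rbac_scope=frozenset({"support-team"}),
)
```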

Switching from one model to another is a configuration change in the Gateway, not a code rewrite in every app.
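One way to picture this: applications reference a stable alias, and the Gateway maps that alias to a concrete model. The sketch below assumes a simple dictionary-based routing table; the alias, model identifiers and function names are hypothetical and do not reflect the Gateway's real configuration format.

```python
# Hypothetical routing table: apps name an alias, never a concrete model.
ROUTES = {"chat-default": "provider-a/model-v1"}


def resolve(alias: str, routes: dict[str, str]) -> str:
    """Return the concrete model an alias currently points to."""
    try:
        return routes[alias]
    except KeyError:
        raise LookupError(f"no route configured for alias {alias!r}") from None


def app_request(routes: dict[str, str]) -> str:
    # Application code stays the same before and after the switch.
    return resolve("chat-default", routes)


before = app_request(ROUTES)
ROUTES["chat-default"] = "provider-b/model-v2"  # the configuration change
after = app_request(ROUTES)
```

The application function is untouched between the two calls; only the routing entry changes.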

What it does.

Provider-agnostic catalogue

OpenAI, Anthropic, Google, Mistral, Meta, Hugging Face, Azure OpenAI, Azure AI Foundry, AWS Bedrock, on-prem deployments and fine-tuned SLMs.

Approval lifecycle

Draft → approved → deprecated → retired. Every transition logged.

Allowed use cases

Restrict each model to the use cases it's been approved for.

Version + deployment mode

Each entry records a version and a deployment mode: cloud-hosted, private or fine-tuned. The Gateway routes accordingly.

Cost metadata

Per-token pricing, latency, region — feeds the Insights dashboard.

Audit trail

Every approval, deprecation and access change is an event in the dossier.
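The approval lifecycle and audit trail described above can be sketched as a small state machine that records every transition. This is a minimal illustration that assumes transitions are strictly linear (draft → approved → deprecated → retired); the real registry may permit other paths, and the function name and event fields are hypothetical.

```python
from datetime import datetime, timezone

# Assumed forward-only transitions, following the order of the lifecycle above.
NEXT_STATE = {
    "draft": "approved",
    "approved": "deprecated",
    "deprecated": "retired",
}


def transition(model: dict, new_state: str, actor: str, log: list) -> None:
    """Move a model to new_state and append an audit event, or raise ValueError."""
    current = model["state"]
    if NEXT_STATE.get(current) != new_state:
        raise ValueError(f"illegal transition: {current} -> {new_state}")
    model["state"] = new_state
    log.append({
        "model": model["name"],
        "from": current,
        "to": new_state,
        "actor": actor,
        "at": datetime.now(timezone.utc).isoformat(),
    })


audit_log: list = []
model = {"name": "support-summarizer", "state": "draft"}  # hypothetical model
transition(model, "approved", actor="ai-governance", log=audit_log)
transition(model, "deprecated", actor="ai-governance", log=audit_log)
```

After the two calls, `audit_log` holds one event per transition, each with the actor and a UTC timestamp.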

Module questions, answered straight.

Which model providers are supported?

OpenAI, Anthropic, Google, Mistral, Meta, Hugging Face, Azure OpenAI, Azure AI Foundry, AWS Bedrock, on-prem deployments, and fine-tuned SLMs. New providers are added each release.

Can we register private and fine-tuned models?

Yes. Public LLMs, private LLMs, embedding models and fine-tuned SLMs sit side by side, under the same approval lifecycle and access controls.

How is approval state managed?

Each model has a state — draft, approved, deprecated, retired — and a list of allowed use cases. RBAC determines which teams can call which models.
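To make the combination of state, use cases and RBAC concrete, here is a minimal sketch of an access check. It assumes that only models in the approved state are callable (whether deprecated models remain callable is a policy decision not stated here), and the function, field and team names are hypothetical.

```python
def can_call(model: dict, team: str, use_case: str) -> bool:
    """Allow a call only if state, use case and team scope all permit it."""
    return (
        model["state"] == "approved"                 # assumed policy: approved only
        and use_case in model["allowed_use_cases"]
        and team in model["teams"]
    )


# Hypothetical registry entry for illustration.
summarizer = {
    "name": "support-summarizer",
    "state": "approved",
    "allowed_use_cases": {"ticket-summarization"},
    "teams": {"support-team"},
}

allowed = can_call(summarizer, "support-team", "ticket-summarization")
wrong_use_case = can_call(summarizer, "support-team", "code-generation")
wrong_team = can_call(summarizer, "marketing-team", "ticket-summarization")
```

Here `allowed` is `True`, while `wrong_use_case` and `wrong_team` are both `False`.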

See the Model Registry.

Walk through approval, lifecycle and per-use-case scoping.