AI GOVERNANCE · LLM ROUTERS

Pick the right model for each prompt.

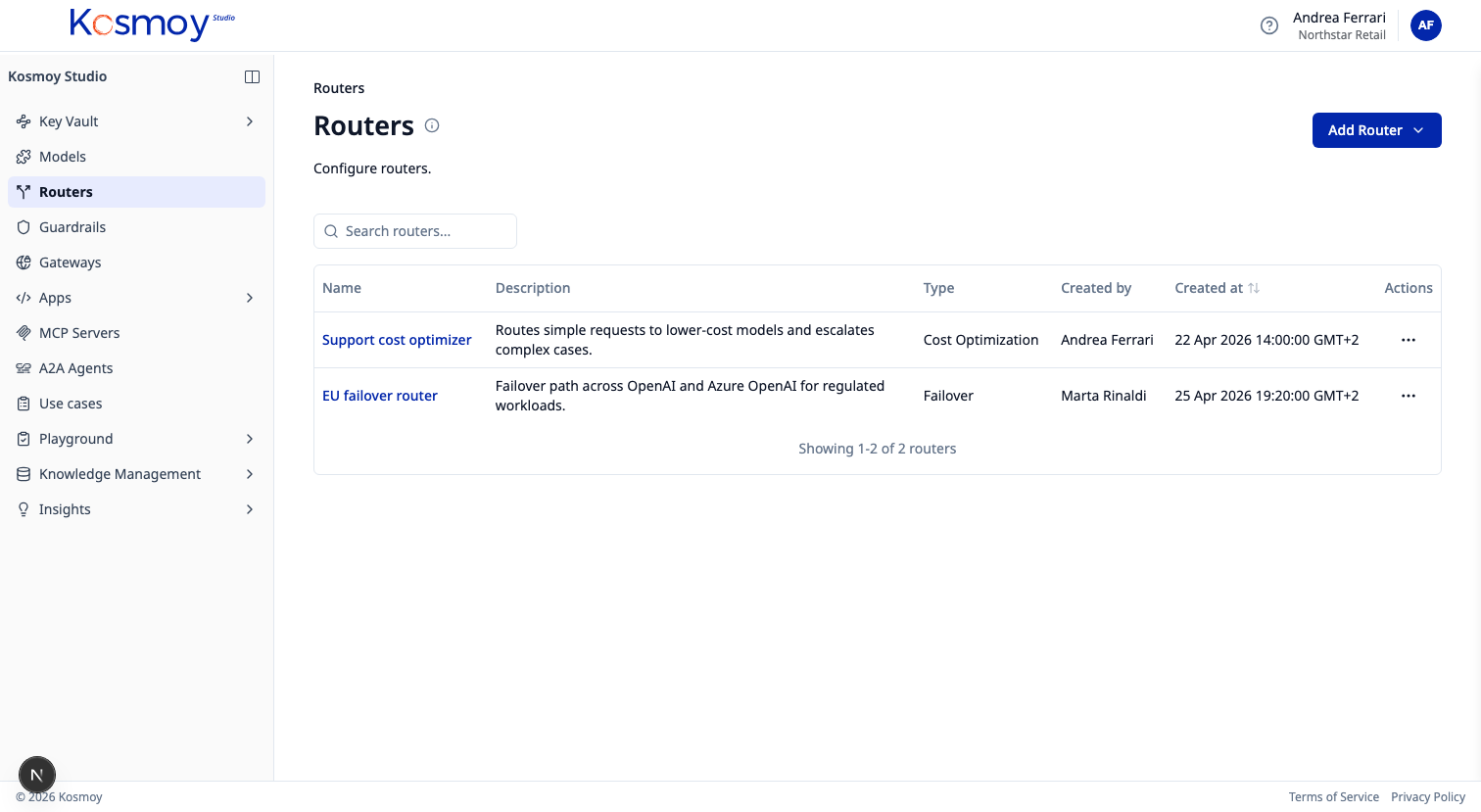

Agentic routing for cost optimisation. Algorithmic routing for fault tolerance and load balancing. One Gateway endpoint.

The price gap between a frontier model and a small one is often two orders of magnitude. The LLM Router decides which one serves each prompt — without changing application code.

Quality is monitored through user feedback and performance metrics; if a cheaper route degrades, the Router reverts.

What it does.

Agentic router

A small fine-tuned judge model picks the cheapest model that fits the task.

Algorithmic router

Primary + fallback chains. Fault tolerance and load balancing across providers.

Quality feedback loop

User feedback and performance metrics feed routing decisions.

Per-use-case rules

Some use cases pin to a specific model. The Router respects the constraint.

Observable

Every routing decision is an event. Audit and explain after the fact.

Zero app changes

Apps call the OpenAI-compatible Gateway endpoint. The Router is invisible.

Module questions, answered straight.

What's the difference between agentic and algorithmic routing?

Agentic routing: a small fine-tuned judge model reads the prompt and picks the cheapest model that fits the task. Algorithmic routing: rule-based fallback when the primary fails, plus load balancing across providers.
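To make the distinction concrete, here is a minimal Python sketch of both modes. It is illustrative only and not the Router's implementation: the model names, prices, and capability tiers are placeholders, and `judge_required_tier` is a crude keyword heuristic standing in for the fine-tuned judge model.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Sequence


@dataclass(frozen=True)
class Model:
    name: str                  # hypothetical model identifier
    cost_per_1k_tokens: float  # placeholder price
    tier: int                  # capability tier; higher handles harder tasks


# Hypothetical catalogue; names and prices are made up for illustration.
CATALOGUE = [
    Model("small-model", 0.0002, 1),
    Model("mid-model", 0.003, 2),
    Model("frontier-model", 0.03, 3),
]


def judge_required_tier(prompt: str) -> int:
    """Stand-in for the judge model: a keyword and length heuristic."""
    text = prompt.lower()
    if any(word in text for word in ("prove", "refactor", "multi-step")):
        return 3
    if len(text.split()) > 50:
        return 2
    return 1


def agentic_route(prompt: str, catalogue: Sequence[Model] = CATALOGUE) -> Model:
    """Agentic routing: cheapest model whose tier satisfies the judge."""
    required = judge_required_tier(prompt)
    eligible = [m for m in catalogue if m.tier >= required]
    return min(eligible, key=lambda m: m.cost_per_1k_tokens)


class ProviderError(Exception):
    """Raised by a provider call that fails (timeout, outage, rate limit)."""


def algorithmic_route(prompt: str, chain: Sequence[Callable[[str], str]]) -> str:
    """Algorithmic routing: try the primary, then each fallback in order."""
    last_error: Optional[ProviderError] = None
    for call_provider in chain:
        try:
            return call_provider(prompt)
        except ProviderError as exc:
            last_error = exc  # remember the failure and move down the chain
    raise ProviderError("all providers in the chain failed") from last_error
```

The two modes address different questions: the agentic path decides which model is good enough for a prompt, while the algorithmic path decides what happens when the chosen provider is unavailable.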

Does routing affect application code?

No. Apps call the Kosmoy Gateway (OpenAI-compatible API). The Router decides which model serves each prompt. The app sees one endpoint.
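As a rough illustration of what "one endpoint" means for the app, the sketch below builds a standard OpenAI-style chat-completions request using only the Python standard library. The gateway URL, the API key handling, and the `router-default` model value are assumptions made for this example, not documented Kosmoy settings.

```python
import json
import urllib.request

# Placeholder URL: substitute the address of your own Gateway deployment.
GATEWAY_URL = "https://gateway.example.com/v1/chat/completions"


def build_chat_request(prompt: str, api_key: str,
                       model: str = "router-default") -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat-completions request.

    The `model` default is a hypothetical value; how the Router is selected
    in a real deployment is not specified here.
    """
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        GATEWAY_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

The request shape stays the same regardless of which model ends up serving the prompt, which is why the application code does not need to change.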

How is quality protected when cost is cut?

User feedback and performance metrics feed back into the routing decision. If cheaper-model quality drops, the Router reverts. The optimisation loop is observable.
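One way such a revert rule could work is sketched below in Python. It assumes feedback arrives as scores between 0 and 1 and that the Router falls back to a baseline model once the rolling average for the cheaper route drops below a threshold. The class name, threshold, and window size are hypothetical, and the actual Router logic may differ.

```python
from collections import deque


class RouteMonitor:
    """Tracks feedback for a cheaper route and reverts if quality drops."""

    def __init__(self, cheap_model: str, baseline_model: str,
                 min_score: float = 0.8, window: int = 20) -> None:
        self.cheap_model = cheap_model
        self.baseline_model = baseline_model
        self.min_score = min_score          # hypothetical quality threshold
        self.scores = deque(maxlen=window)  # rolling window of recent scores
        self.reverted = False

    def record(self, score: float) -> None:
        """Add one feedback score (0.0 = bad, 1.0 = good) and re-check quality."""
        self.scores.append(score)
        window_full = len(self.scores) == self.scores.maxlen
        if window_full and sum(self.scores) / len(self.scores) < self.min_score:
            self.reverted = True  # quality dropped: stop using the cheap route

    def current_model(self) -> str:
        """Model that should serve the next prompt for this use case."""
        return self.baseline_model if self.reverted else self.cheap_model
```

Waiting for a full window before acting avoids reverting on a single bad rating; a production rule would likely also combine latency and error-rate metrics.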

See agentic + algorithmic routing in action.

Walk through the cost-optimisation loop and fault tolerance across providers.