AI GOVERNANCE · MIDDLE RADAR LAYER

Route every AI call through one policy point.

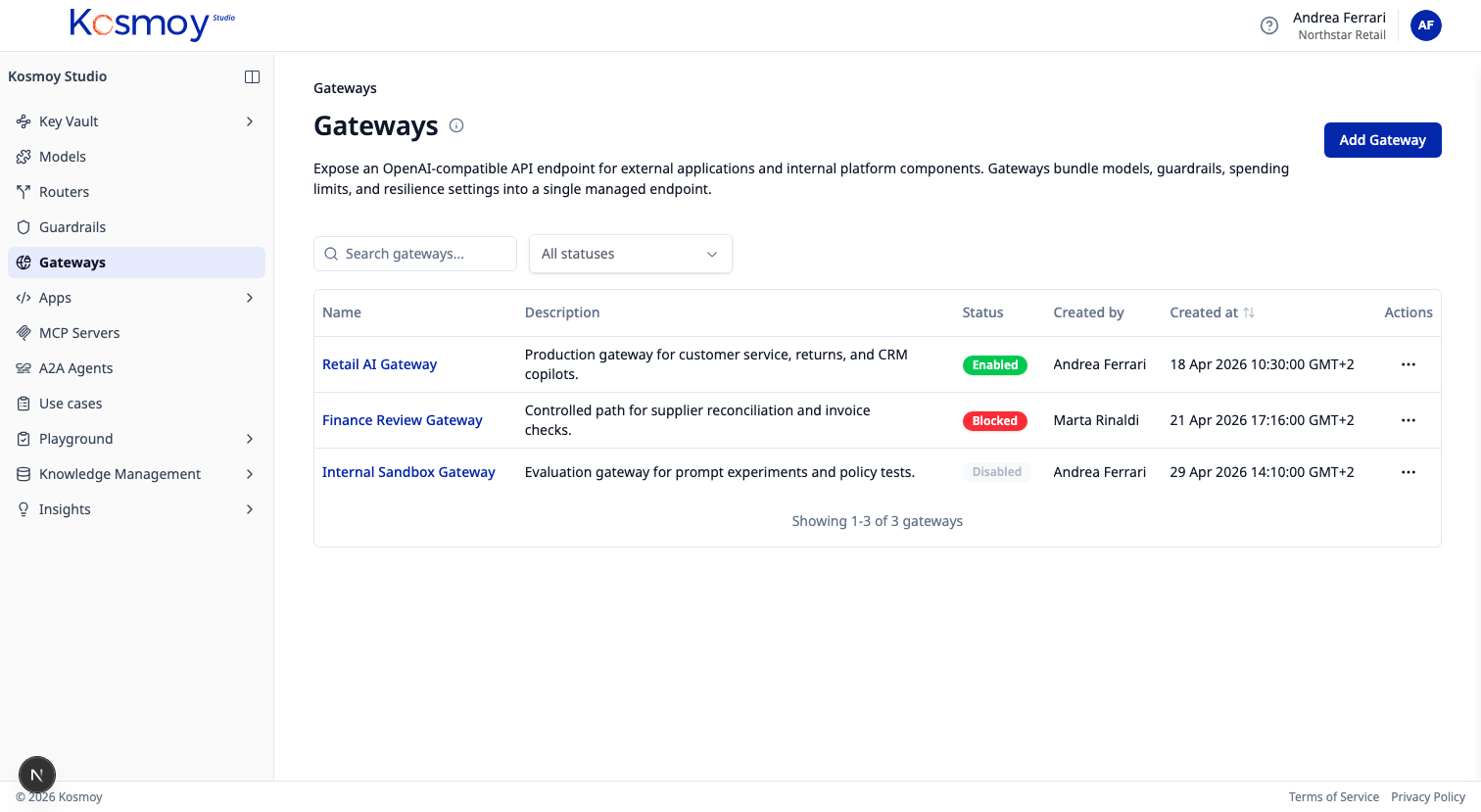

Custom apps, no-code agents, RAG systems, MCP clients, A2A agents and developer coding tools all route through one Gateway. The platform team picks which models are approved, who can call them, which guardrails run, what gets logged, and how cost is attributed.

The first job of an enterprise AI control plane is the policy point. Without one, every team rebuilds the same plumbing — auth, model access, prompt management, logging, guardrails, cost tracking. Same policy, different enforcement. Same risk, different blast radius.

The Kosmoy AI Gateway is that policy point. API keys never reach the application. Developers call Kosmoy. Kosmoy holds the destination credentials in the Key Vault and uses them on the application’s behalf. Every call is authenticated, authorised, guardrailed, routed, logged, costed and attributable. One place. One set of rules.
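The credential swap described above can be sketched as follows. This is an illustration under stated assumptions, not Kosmoy's implementation: the in-memory `KEY_VAULT` and `APP_TOKENS` tables, the token values and the `outbound_credentials` helper are all hypothetical stand-ins.

```python
# Hypothetical stand-ins for the Key Vault and for tokens issued to applications.
KEY_VAULT = {"example-provider": "provider-secret-key"}
APP_TOKENS = {"app-token-123": "helpdesk-bot"}


def outbound_credentials(app_token: str, provider: str) -> tuple[str, dict]:
    """Authenticate the caller, then return (app name, headers for the provider call).

    The application only ever presents its own token; the provider key is
    looked up on the Gateway side and never returned to the caller.
    """
    app = APP_TOKENS.get(app_token)
    if app is None:
        raise PermissionError("unknown application token")
    headers = {"Authorization": f"Bearer {KEY_VAULT[provider]}"}
    return app, headers


app, headers = outbound_credentials("app-token-123", "example-provider")
```

The returned application name is what makes the call attributable; the provider key appears only in the outbound headers.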

Coded apps speak the OpenAI-compatible API. A Python service using the openai SDK, a LangChain agent, a custom RAG pipeline — they swap the base URL to Kosmoy and the rest stays the same. Same for LangGraph, LlamaIndex, and any tool that already calls an OpenAI endpoint.
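A minimal sketch of the base-URL swap at the HTTP level, assuming the Gateway exposes the usual OpenAI-style `/chat/completions` route. The host `gateway.example.internal`, the key values and the model name are placeholders, not documented Kosmoy values. The requests are built but not sent.

```python
import json
import urllib.request

# Hypothetical values: use the Gateway URL and key issued by your platform team.
GATEWAY_BASE_URL = "https://gateway.example.internal/v1"
GATEWAY_API_KEY = "kosmoy-issued-key"  # placeholder, not a provider key


def build_chat_request(base_url: str, api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request against any compatible base URL."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Only the base URL and key differ between a direct call and a call via the Gateway.
direct = build_chat_request("https://api.openai.com/v1", "provider-key", "example-model", "Hello")
via_gateway = build_chat_request(GATEWAY_BASE_URL, GATEWAY_API_KEY, "example-model", "Hello")
```

With the openai Python SDK, the same swap is the `base_url` argument passed to the `OpenAI(...)` client constructor; the request body is unchanged.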

Off-the-shelf software speaks BYOM. ServiceNow Now Assist, Salesforce Einstein Trust Layer, Anthropic’s Claude Code — any platform that supports Bring-Your-Own-Model points at Kosmoy. The vendor keeps its UX. The enterprise keeps its policy point.

The Gateway speaks three protocols outbound: an OpenAI-compatible API to LLM providers (and to vLLM, Ollama and fine-tuned SLMs running on-prem); MCP to registered MCP servers (internal tools, enterprise APIs and third-party servers); and A2A to agent runtimes (Kosmoy-Capsuled agents, Azure AI Foundry, Bedrock and any A2A-compliant agent).

Request lifecycle, end to end

Six capabilities, one path.

Access control

Which users, teams, applications, and agents can reach which models, tools, and routes. Scoped by use case.
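One way to picture scoped access is a deny-by-default lookup keyed on team and use case. The sketch below is illustrative only: the `Caller` type, the `POLICY` table and the model names are hypothetical, and a real deployment would read policy from the platform rather than a dict.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Caller:
    app: str
    team: str
    use_case: str


# Hypothetical policy table: (team, use case) -> models that scope may call.
POLICY = {
    ("support", "ticket-summaries"): {"small-model", "frontier-model"},
    ("marketing", "copy-drafts"): {"small-model"},
}


def is_allowed(caller: Caller, model: str) -> bool:
    """Deny by default; allow only models listed for the caller's team and use case."""
    return model in POLICY.get((caller.team, caller.use_case), set())
```

A caller whose team and use case are not in the table gets an empty set, so every model is refused until a scope is granted.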

Guardrails in the path

Toxic language, PII, prompt injection, EU AI Act risk, custom policies. Inspect prompts and responses before they leave.
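As an illustration of the simplest kind of in-path check, here is a regex-based PII redactor. It is a sketch, not the Gateway's guardrail: the two patterns are deliberately loose assumptions and would miss many real-world formats.

```python
import re

# Deliberately simple patterns, for illustration only.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact_pii(text: str) -> tuple[str, list[str]]:
    """Replace matches with a label and report which PII types were found."""
    findings = []
    for label, pattern in (("EMAIL", EMAIL), ("PHONE", PHONE)):
        text, count = pattern.subn(f"[{label}]", text)
        if count:
            findings.append(label)
    return text, findings


clean, found = redact_pii("Contact jane.doe@example.com or +44 20 7946 0958 today")
# clean == "Contact [EMAIL] or [PHONE] today", found == ["EMAIL", "PHONE"]
```

The same function can run on the prompt before it leaves and on the response before it returns; the findings list is what a policy event would record.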

Smart routing

The agentic router picks the cheapest model that fits the task. The algorithmic router handles fallback and load balancing.

Logging and evidence

Request metadata, model choice, cost, policy events, feedback, conversation records. Routed to the dossier.

Budgets

Limits by model, app, team, environment, use case. Alerts before spend escapes. Hard caps where the policy demands.
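The difference between an alert and a hard cap can be sketched with a small budget object. The `Budget` class, the 80% alert threshold and the status strings are assumptions made for this example, not Kosmoy settings.

```python
from dataclasses import dataclass


@dataclass
class Budget:
    limit_usd: float
    alert_ratio: float = 0.8  # hypothetical default: alert at 80% of the limit
    hard_cap: bool = True
    spent_usd: float = 0.0

    def charge(self, cost_usd: float) -> str:
        """Record a cost and return 'ok', 'alert' or 'blocked'."""
        projected = self.spent_usd + cost_usd
        if self.hard_cap and projected > self.limit_usd:
            return "blocked"  # request rejected; nothing is recorded
        self.spent_usd = projected
        if projected >= self.limit_usd * self.alert_ratio:
            return "alert"
        return "ok"


team_budget = Budget(limit_usd=100.0)
statuses = [team_budget.charge(50.0), team_budget.charge(35.0), team_budget.charge(20.0)]
# statuses == ["ok", "alert", "blocked"]; spend stays at 85.0
```

With `hard_cap=False` the same budget keeps recording spend past the limit and only raises alerts, which matches a policy that wants visibility without blocking.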

Provider independence

OpenAI, Anthropic, Google, Mistral, Meta, Hugging Face, Azure, AWS, on-prem, fine-tuned SLMs — through one path.

Application → Kosmoy AI Gateway

↓

Auth & RBAC

↓

Guardrails (toxic, PII, injection, AI Act, custom)

↓

Router (agentic + algorithmic)

↓

Model · MCP · A2A · HTTPS

↓

Logs · cost · feedback · evidence → Insights Dashboard

→ AI Act dossier

What runs inside the Gateway.

- Auth

- RBAC

- Guardrails

- Agentic router

- Algorithmic router

- Logger

Smart routing detail.

Agentic router. A small fine-tuned model (the “judge”) reads each prompt and picks a target model based on the task complexity. Simple prompts go to a smaller, cheaper model; complex prompts go to a frontier model. Quality is monitored through Insights — if feedback degrades, the router weights are adjusted or the affected route is reverted.
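The shape of that decision can be sketched in a few lines. Note the substitution: the judge here is a crude keyword-and-length heuristic standing in for the fine-tuned model, and the route table and model names are hypothetical.

```python
from typing import Callable

# Hypothetical route table: complexity label -> model name.
ROUTES = {"simple": "small-cheap-model", "complex": "frontier-model"}


def heuristic_judge(prompt: str) -> str:
    """Stand-in for the fine-tuned judge: a crude length and keyword check."""
    hard_markers = ("prove", "analyse", "analyze", "step by step", "compare")
    text = prompt.lower()
    if len(text.split()) > 60 or any(marker in text for marker in hard_markers):
        return "complex"
    return "simple"


def pick_model(prompt: str, judge: Callable[[str], str] = heuristic_judge) -> str:
    """Route to the model mapped to the judge's label; unknown labels go to the frontier model."""
    return ROUTES.get(judge(prompt), ROUTES["complex"])
```

Passing the judge in as a function keeps the routing table separate from the classifier, so a heuristic like this one could be replaced by a call to a real judge model without touching `pick_model`.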

Algorithmic router. Primary model + fallbacks. If the primary fails (rate limit, outage, latency spike), traffic flows to the fallback automatically. No application changes.
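A primary-plus-fallbacks chain is a short loop. The sketch below assumes provider failures surface as a `ProviderError` exception; that class, the `call_model` callable and the model names are hypothetical, and `fake_call` simulates a rate-limited primary instead of contacting a provider.

```python
from typing import Callable, Sequence


class ProviderError(Exception):
    """Hypothetical error for a rate limit, outage or timeout at a provider."""


def call_with_fallback(
    prompt: str,
    models: Sequence[str],
    call_model: Callable[[str, str], str],
) -> tuple[str, str]:
    """Try each model in order; return (model used, reply) from the first that succeeds."""
    errors = []
    for model in models:
        try:
            return model, call_model(model, prompt)
        except ProviderError as exc:
            errors.append(f"{model}: {exc}")
    raise ProviderError("all models failed: " + "; ".join(errors))


def fake_call(model: str, prompt: str) -> str:
    """Simulated provider call: the primary is rate limited, the fallback answers."""
    if model == "primary-model":
        raise ProviderError("rate limited")
    return f"{model} answered: {prompt}"


used, reply = call_with_fallback("ping", ["primary-model", "fallback-model"], fake_call)
# used == "fallback-model"
```

Because the retry happens inside the router, the calling application sees one successful response and needs no fallback logic of its own.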

What the Gateway is not.

- Not the runtime. Models, agents and MCP servers run elsewhere — public providers, private deployments, or Kosmoy Action Capsules. The Gateway sits in front.

- Not a chat UI. Kosmoy Chat is a separate product. The Gateway speaks an OpenAI-compatible API for applications to call.

- Not a vector database. RAG retrievers connect to the customer’s vector DB (Pinecone, Weaviate, Snowflake, Databricks, pgvector). The Gateway routes the LLM call.

Provider integrations

Connect once. Switch any time.

- OpenAI

- Anthropic

- Mistral

- Meta

- Hugging Face

- Azure OpenAI

- Azure AI Foundry

- AWS Bedrock

- vLLM (on-prem)

- Ollama (on-prem)

- Fine-tuned SLMs

Module questions, answered straight.

Does the application have to change to use the Gateway?

Most apps only change the base URL, because the Gateway speaks an OpenAI-compatible API. For tools that already call an OpenAI endpoint (LangChain, LlamaIndex, custom Python), it is a one-line change.

Can the Gateway run alone, without Action Capsules?

Yes. Phase 2 of adoption is Gateway-alone: governance and observability over external models. Action Capsules (Phase 3) are added when the runtime needs full containment.

How does the Gateway handle MCP servers?

MCP servers register in the MCP Server Registry. The Gateway authenticates the calling application or agent, applies guardrails, logs the call, and forwards the request to the registered MCP server. Internal MCP servers can run in an Action Capsule.

Where do API keys live?

In the Kosmoy Key Vault. Never in the application. Never on user devices. Rotated centrally; audited.

Does it slow down the request path?

Yes, by an amount that depends on which guardrails are enabled. Built-in fast-path checks (regex, list-based PII) take under 10 ms. Fine-tuned SLM guardrails take under 200 ms. Frontier-model guardrails, when explicitly enabled, are slower and are used only where policy demands them.

See the AI Gateway in action.

Walk through auth, guardrails, routing, logging and budgets — with real provider calls.