AI GOVERNANCE · LLM GATEWAY

Multi-provider model access. One policy point.

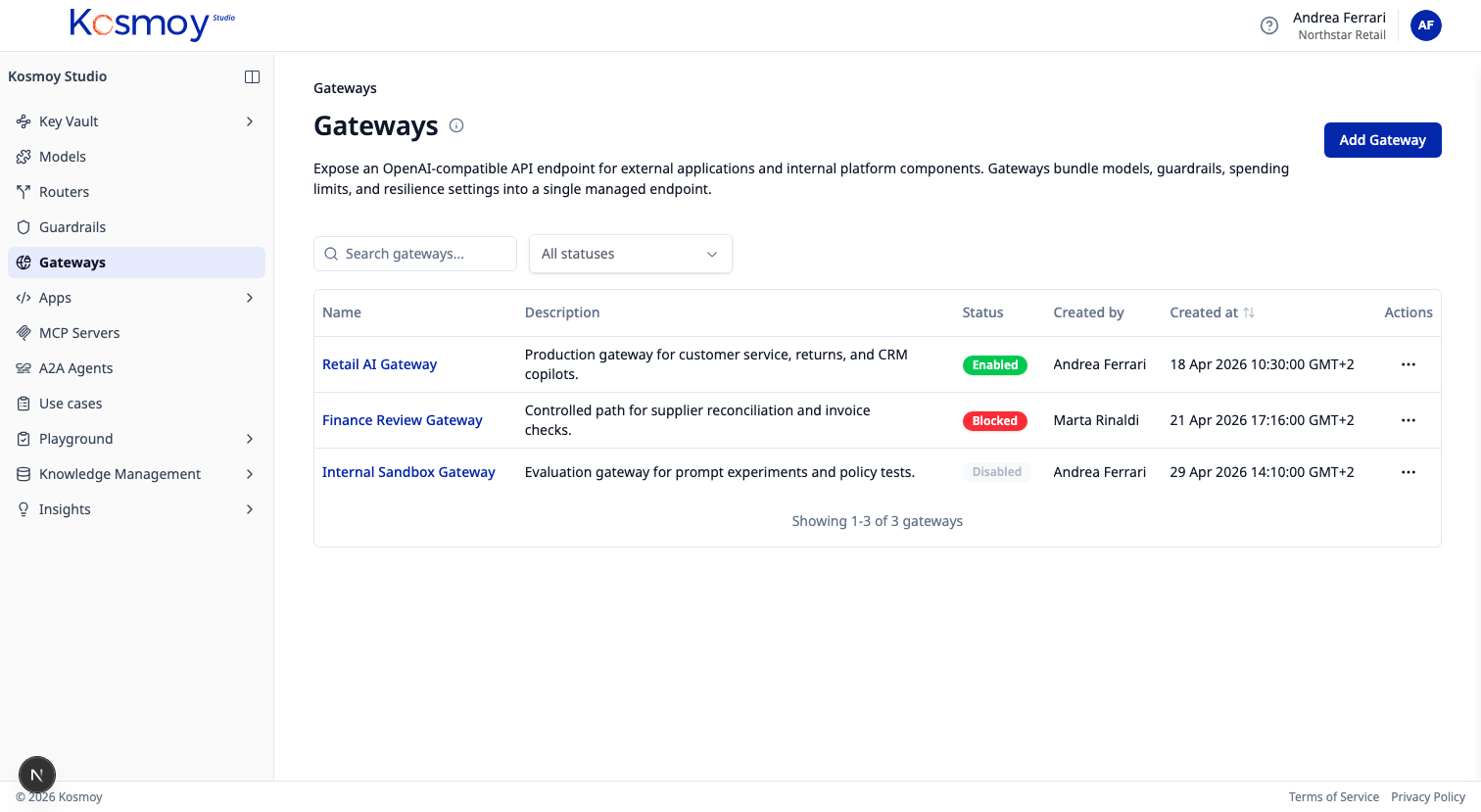

Apps call one OpenAI-compatible endpoint. Kosmoy decides which model serves the prompt, applies guardrails, and logs the call.

The LLM Gateway is what most enterprises adopt first. It centralises model access, swaps providers without code changes, and applies guardrails uniformly across every app.

Procurement consolidates. Spend visibility lands on day one. The compliance team gets the dossier as the system runs.

What it does.

OpenAI-compatible API

One endpoint for every supported provider. Apps don't notice the switch.

Multi-provider

OpenAI, Anthropic, Google, Mistral, Meta, Hugging Face, Azure, AWS, on-prem, SLMs.

Guardrails in the path

Toxic language, PII, prompt injection, policy compliance. Configured once.

RBAC + budgets

Per-team and per-app access. Soft and hard caps before spend escapes.
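As a rough illustration of how soft and hard caps could behave, here is a minimal sketch. The `Budget` class, the `check_budget` function, and the dollar figures are hypothetical and do not describe the Gateway's actual configuration. The sketch assumes a soft cap only flags the call while a hard cap rejects it.

```python
from dataclasses import dataclass


@dataclass
class Budget:
    """Hypothetical per-team budget; field names are illustrative only."""
    soft_cap_usd: float  # crossing this triggers a warning
    hard_cap_usd: float  # crossing this blocks the call


def check_budget(spent_usd: float, request_cost_usd: float, budget: Budget) -> str:
    """Return 'allow', 'warn', or 'block' for a call, based on projected spend."""
    projected = spent_usd + request_cost_usd
    if projected > budget.hard_cap_usd:
        return "block"
    if projected > budget.soft_cap_usd:
        return "warn"
    return "allow"


team_budget = Budget(soft_cap_usd=80.0, hard_cap_usd=100.0)
print(check_budget(50.0, 1.0, team_budget))  # allow
print(check_budget(79.5, 1.0, team_budget))  # warn
print(check_budget(99.5, 1.0, team_budget))  # block
```

Checking the projected spend rather than the current spend is one possible design choice: it stops the call that would cross the cap instead of the one after it.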

Logging + cost

Every call captured with model, latency, tokens, cost. Feeds Insights.

Provider failover

Algorithmic router falls back across providers when one is unavailable.
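The failover idea can be sketched as an ordered fallback loop. This is a simplified illustration, not the Gateway's routing algorithm, which is not described here. `ProviderUnavailable`, `call_with_failover`, and the stub providers are all hypothetical names.

```python
from typing import Callable, Sequence, Tuple


class ProviderUnavailable(Exception):
    """Raised by a provider call when the upstream cannot serve the request."""


def call_with_failover(
    prompt: str,
    providers: Sequence[Tuple[str, Callable[[str], str]]],
) -> Tuple[str, str]:
    """Try providers in priority order; return (name, reply) from the first success."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except ProviderUnavailable as exc:
            # Record the failure and move on to the next provider.
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all providers unavailable: " + "; ".join(errors))


# Stub providers standing in for real model backends.
def primary(prompt: str) -> str:
    raise ProviderUnavailable("503 from upstream")


def secondary(prompt: str) -> str:
    return f"echo: {prompt}"


served_by, reply = call_with_failover(
    "ping", [("primary", primary), ("secondary", secondary)]
)
print(served_by, reply)  # secondary echo: ping
```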

Module questions, answered straight.

Is this just a model proxy?

No. The LLM Gateway is the policy boundary between your apps and every model: auth, RBAC, guardrails, routing, logging, cost tracking. The OpenAI-compatible API is the carrier; the policy is the product.

Which model providers does it cover?

OpenAI, Anthropic, Google, Mistral, Meta, Hugging Face, Azure OpenAI, Azure AI Foundry, AWS Bedrock, on-prem deployments and fine-tuned SLMs.

Does it work with my existing app code?

Most apps just change the base URL. The Gateway speaks an OpenAI-compatible API. For tools already pointing at OpenAI (LangChain, LlamaIndex, custom Python), it's a one-line change.
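To make the base-URL change concrete, here is a minimal sketch using only the Python standard library. It builds an OpenAI-style chat completion request without sending it. The gateway URL, API key, and model name are placeholders chosen for illustration, not real Kosmoy values.

```python
import json
import urllib.request

# Placeholders: swap in the endpoint and key issued for your deployment.
GATEWAY_BASE_URL = "https://gateway.example.com/v1"  # hypothetical gateway URL
API_KEY = "sk-placeholder"                           # hypothetical key


def build_chat_request(
    base_url: str, api_key: str, model: str, prompt: str
) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Before: base_url was "https://api.openai.com/v1". Only that argument changes.
request = build_chat_request(GATEWAY_BASE_URL, API_KEY, "gpt-4o-mini", "Hello")
print(request.full_url)
```

With the official `openai` Python SDK, the equivalent change would typically be passing `base_url` to the client constructor; the request body stays the same.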

See the LLM Gateway in production.

Walk through provider switching, guardrails, RBAC and the cost dashboard.