The EU AI Act is no longer a future concern. It is the law. In force since August 1, 2024, it is the world's first comprehensive legal framework for artificial intelligence, and for CIOs across every industry operating in or with the European Union, the compliance clock is already ticking.

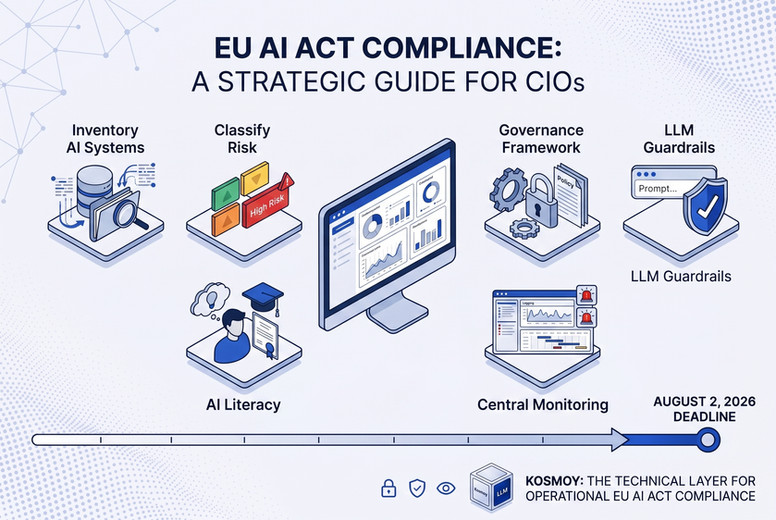

The most critical deadline falls on August 2, 2026, when most obligations for high-risk AI systems, including those listed in Annex III, start to apply. That may sound distant. But mapping your AI landscape, implementing governance frameworks, updating internal policies, and training your workforce takes months, not days. The time to act is now.

For CIOs, the AI Act isn't just a legal matter to be handed off to compliance. It's a technology governance challenge that sits squarely at the intersection of IT strategy, risk management, and enterprise AI adoption. This article breaks down what the AI Act requires and the concrete steps CIOs need to take right now.

What the EU AI Act Actually Requires

The AI Act takes a risk-based approach, classifying AI systems into four categories: unacceptable risk (prohibited), high risk, limited risk, and minimal risk. The obligations that apply to your organization depend entirely on which category your AI systems fall into.

Prohibited AI systems, including social scoring systems and, with narrow exceptions, real-time remote biometric identification in publicly accessible spaces for law enforcement, have been banned since February 2, 2025. If your organization uses anything in this category, the time to remediate has already passed.

High-risk AI systems are where most enterprise CIOs need to focus. Annex III of the AI Act lists high-risk use cases in areas including employment and HR decisions, credit and financial services, education, healthcare, critical infrastructure, and law enforcement. If your organization uses AI for one of the listed use cases in these domains (and most enterprises do), strict compliance obligations apply from August 2, 2026.

Providers of general-purpose AI (GPAI) models, such as the large language models used in enterprise applications, have been subject to their own obligations since August 2, 2025. These include technical documentation requirements, transparency disclosures, and, for models posing systemic risk, mandatory adversarial testing and incident reporting.

What Non-Compliance Costs

The AI Act's penalty regime is not symbolic.

- Violations of prohibited practices provisions: fines of up to €35 million or 7% of global annual turnover — whichever is higher.

- Failure to comply with high-risk AI obligations: fines of up to €15 million or 3% of global annual turnover, whichever is higher.
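To make the "whichever is higher" rule concrete, here is a minimal sketch of the ceiling arithmetic. The function name `max_fine_eur` and the tier labels are illustrative and do not come from the Act. The result is only the statutory upper bound for each tier, not a prediction of what a regulator would actually impose.

```python
from decimal import Decimal

# Fixed caps and turnover percentages for the two tiers named above.
# The tier keys are illustrative labels, not terms from the Act.
FINE_TIERS = {
    "prohibited_practice": (Decimal("35000000"), Decimal("0.07")),
    "high_risk_obligation": (Decimal("15000000"), Decimal("0.03")),
}


def max_fine_eur(tier: str, global_turnover_eur: Decimal) -> Decimal:
    """Return the fine ceiling: the higher of the fixed cap and the
    percentage of global annual turnover."""
    fixed_cap, pct = FINE_TIERS[tier]
    return max(fixed_cap, global_turnover_eur * pct)


if __name__ == "__main__":
    turnover = Decimal("2000000000")  # hypothetical EUR 2 billion turnover
    for tier in FINE_TIERS:
        print(tier, max_fine_eur(tier, turnover))
```

For a hypothetical company with €2 billion in global turnover, the percentage dominates in both tiers: the ceilings are €140 million and €60 million. For a company with €100 million in turnover, the fixed caps of €35 million and €15 million apply instead.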

Beyond financial penalties, exposure on this scale makes AI governance a board-level responsibility. Depending on the jurisdiction, directors can face personal liability under corporate fiduciary duties if they consciously ignore material regulatory risks. For CIOs, that means AI compliance is no longer a nice-to-have. It is part of the organization's fiduciary duty of care.

6 Things CIOs Must Do Before August 2026

The path to AI Act compliance is not a single project — it's a set of interconnected workstreams that need to be running in parallel. Here is the CIO's action list.

1. Conduct a Full AI System Inventory

You cannot govern what you cannot see. The first step is mapping every AI system in use across the organization — including third-party tools and embedded AI features in SaaS platforms. Many enterprises are shocked to discover how many AI-powered tools are running across departments without central IT visibility. For each system, document its purpose, the data it processes, the decisions it influences, and which department owns it.
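As a starting point, the inventory can be as simple as one structured record per system. The sketch below is an assumption about what such a record might look like: the class `AISystemRecord`, its field names, and the helper `missing_fields` are hypothetical and simply mirror the items listed above (purpose, data, decisions, owner).

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AISystemRecord:
    """One row in a hypothetical AI system inventory."""
    name: str
    purpose: str
    data_processed: list[str]
    decisions_influenced: list[str]
    owning_department: str
    vendor: Optional[str] = None     # None for systems built in-house
    embedded_in_saas: bool = False   # AI feature inside a larger SaaS product


def missing_fields(record: AISystemRecord) -> list[str]:
    """List the documentation fields that are still empty for a record."""
    required = {
        "purpose": record.purpose,
        "data_processed": record.data_processed,
        "decisions_influenced": record.decisions_influenced,
        "owning_department": record.owning_department,
    }
    return [name for name, value in required.items() if not value]


if __name__ == "__main__":
    record = AISystemRecord(
        name="cv-screening-assistant",      # hypothetical system
        purpose="Rank incoming job applications",
        data_processed=["CVs", "cover letters"],
        decisions_influenced=[],            # not documented yet
        owning_department="HR",
        vendor="ExampleVendor",
        embedded_in_saas=True,
    )
    print(missing_fields(record))
```

Running a check like `missing_fields` across the whole inventory gives a quick view of which systems still lack the documentation needed for the classification step.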

2. Classify Each AI System by Risk Level

Once you have a complete inventory, classify each AI system according to the AI Act's risk framework. This requires legal and compliance expertise alongside technical knowledge. AI systems used in HR, performance evaluation, credit decisioning, or any domain listed in Annex III require immediate prioritization. Systems in lower-risk categories still need to be documented and monitored.
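A first-pass triage can be automated before legal review. The sketch below is a deliberate simplification: the domain keywords are taken from the areas this article names, the `triage` function and the `interacts_with_people` flag are hypothetical, and a keyword match is only a signal that a system needs a proper legal assessment, not a classification under the Act.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Domains this article names as high-risk areas. A match is a triage
# signal only; the final classification needs legal review.
HIGH_RISK_DOMAINS = {
    "employment", "hr", "credit", "education", "healthcare",
    "critical_infrastructure", "law_enforcement",
}
PROHIBITED_USES = {"social_scoring"}


def triage(domain: str, interacts_with_people: bool = False) -> RiskTier:
    """Assign a provisional tier from a domain label.

    Assumption: systems that interact directly with people (for example
    chatbots) are treated as 'limited' because transparency duties may apply.
    """
    key = domain.strip().lower().replace(" ", "_")
    if key in PROHIBITED_USES:
        return RiskTier.UNACCEPTABLE
    if key in HIGH_RISK_DOMAINS:
        return RiskTier.HIGH
    if interacts_with_people:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL


if __name__ == "__main__":
    for label in ("HR", "Critical Infrastructure", "marketing"):
        print(label, triage(label).value)
```

The point of a provisional tier is prioritization: anything that lands in `HIGH` or `UNACCEPTABLE` goes to legal and compliance first, while the rest stays documented and monitored.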

3. Implement an AI Governance Framework

For high-risk AI systems, the AI Act mandates a functioning risk management system, data governance controls, technical documentation, and human oversight mechanisms. This isn't a one-time audit — it's an ongoing operational capability. CIOs need to design and implement governance processes that can sustain continuous compliance, not just pass a point-in-time check.
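One way to turn this into an ongoing capability rather than a one-time audit is a gap check that runs on a schedule. The sketch below assumes each high-risk system is tagged with the controls for which evidence exists. The control names mirror the four requirements named above, while `control_gaps`, `gap_report`, and the example system names are hypothetical.

```python
# The four control areas named above, as illustrative identifiers.
REQUIRED_CONTROLS = (
    "risk_management_system",
    "data_governance",
    "technical_documentation",
    "human_oversight",
)


def control_gaps(controls_in_place: set[str]) -> list[str]:
    """Return the required controls not yet evidenced, in a stable order."""
    return [c for c in REQUIRED_CONTROLS if c not in controls_in_place]


def gap_report(systems: dict[str, set[str]]) -> dict[str, list[str]]:
    """Map each system to its open gaps, omitting fully covered systems."""
    report = {}
    for name, controls in systems.items():
        gaps = control_gaps(controls)
        if gaps:
            report[name] = gaps
    return report


if __name__ == "__main__":
    systems = {  # hypothetical high-risk systems
        "cv-screening-assistant": {"technical_documentation"},
        "credit-scoring-model": set(REQUIRED_CONTROLS),
    }
    print(gap_report(systems))
```

Rerunning the same report after every system change or policy update is what distinguishes continuous compliance from a point-in-time check.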

4. Enforce Guardrails on All LLM Interactions

One of the most technically demanding parts of AI Act readiness is enforcing content guardrails: detecting and preventing outputs that breach transparency requirements, expose PII, or produce discriminatory or harmful content. The Act does not prescribe a specific guardrail technology, but for enterprises running GenAI applications at scale, this takes infrastructure-level controls, not manual review. Every LLM interaction needs to be inspectable and auditable.
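To illustrate what an infrastructure-level control looks like, here is a minimal sketch of a PII screen that could sit in front of or behind an LLM call. It is an assumption-laden example: the two regular expressions are deliberately simple, the function `screen_text` is hypothetical, and production-grade PII detection needs far broader coverage than email addresses and IBAN-like strings.

```python
import re

# Deliberately simple patterns for illustration only. Real PII detection
# must also cover names, addresses, national IDs, and more.
PII_PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "iban": re.compile(r"\b[A-Z]{2}\d{2}[A-Z0-9]{11,30}\b"),
}


def screen_text(text: str) -> tuple[str, list[str]]:
    """Redact matches and return the redacted text plus the PII types found."""
    found = []
    for label, pattern in PII_PATTERNS.items():
        text, count = pattern.subn(f"[REDACTED_{label.upper()}]", text)
        if count:
            found.append(label)
    return text, found


if __name__ == "__main__":
    prompt = "Contact jane.doe@example.com about DE89370400440532013000"
    redacted, pii_types = screen_text(prompt)
    print(redacted)
    print(pii_types)
```

Because the function returns both the redacted text and the list of PII types it found, the same call can feed the model a safe prompt and write an auditable record of what was removed.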

5. Build AI Literacy Across the Organization

AI literacy obligations under the AI Act took effect in February 2025. Organizations are already legally required to take measures to ensure that employees and contractors who operate AI systems on the company's behalf have a sufficient level of AI literacy. In practice this means training programs and documentation, with records of who was trained, for every role that touches an AI system, not just the data science team. The Act itself does not mandate formal certification.

6. Centralize AI Monitoring and Incident Reporting

The AI Act requires organizations to monitor AI system performance in deployment, track serious incidents, and report them to relevant authorities. This is impossible without a centralized logging and monitoring capability. CIOs need to ensure that every AI system generates observable, auditable data — and that there's a process to act when anomalies are detected.
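A centralized log starts with a consistent event format. The sketch below shows one possible shape, assuming every AI system emits JSON lines to a shared store. The field names, the `serious` flag, and the functions `audit_event` and `serious_incidents` are hypothetical, and deciding what counts as a serious incident remains a legal judgment that code cannot make.

```python
import json
from datetime import datetime, timezone


def audit_event(system: str, event_type: str, detail: str,
                serious: bool = False) -> str:
    """Serialize one AI system event as a JSON line for a central log."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "system": system,
        "event_type": event_type,
        "detail": detail,
        "serious": serious,
    }
    return json.dumps(record, sort_keys=True)


def serious_incidents(log_lines: list[str]) -> list[dict]:
    """Pick out events flagged as serious so they can enter the
    incident reporting process."""
    events = (json.loads(line) for line in log_lines)
    return [e for e in events if e["serious"]]


if __name__ == "__main__":
    log = [
        audit_event("support-chatbot", "pii_redacted", "email removed from prompt"),
        audit_event("cv-screening-assistant", "anomaly",
                    "rejection rate spiked for one applicant group", serious=True),
    ]
    for incident in serious_incidents(log):
        print(incident["system"], incident["event_type"])
```

With every system writing the same structure, a single query over the log can answer both operational questions (what is drifting?) and regulatory ones (what was reported, and when?).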

The Infrastructure Problem: Why Most Enterprises Aren't Ready

The six steps above share a common prerequisite: centralized visibility and control over AI activity across the enterprise. That is precisely what most organizations lack. Departments have deployed AI tools independently, often without IT oversight. LLM interactions are happening in silos, with no unified logging, no shared guardrails, and no way to produce the audit trail the AI Act requires.

This is the infrastructure gap that Kosmoy was built to close. As an enterprise GenAI governance platform, Kosmoy provides the technical layer that makes AI Act compliance operationally viable — not just theoretically achievable.

The Kosmoy LLM Gateway centralizes all LLM interactions across departments into a single, governable infrastructure layer. Every prompt and response is logged, inspected for policy violations, screened for PII leakage, and checked against EU AI Act compliance rules — automatically, in real time, without requiring department-level action. This isn't a post-deployment audit tool; it's a pre-emptive guardrail embedded in the AI infrastructure itself.

Kosmoy Studio enables teams to deploy AI assistants that are pre-configured to comply with governance policies, removing the burden of per-application compliance configuration from individual departments.

The Kosmoy real-time dashboard gives CIOs exactly what the AI Act demands: unified visibility into AI usage, incident logging, compliance status by system, and the operational data needed to demonstrate compliance to regulators.

The AI Act Is a CIO's Problem. And a CIO's Opportunity.

The EU AI Act imposes real obligations with real consequences. But it also creates an opportunity for CIOs to do what many have been trying to do anyway: bring discipline, visibility, and governance to enterprise AI adoption before it becomes ungovernable.

The organizations that will emerge from AI Act compliance with the strongest position are not those that do the minimum to avoid a fine. They are those that use compliance as the catalyst to build the AI governance infrastructure they should have built already — a unified, auditable, controlled environment in which AI can be deployed at scale without creating legal, operational, or reputational risk.

August 2, 2026 is the deadline. But the work starts today.

Ready to make your GenAI infrastructure AI Act compliant? Learn more at kosmoy.com