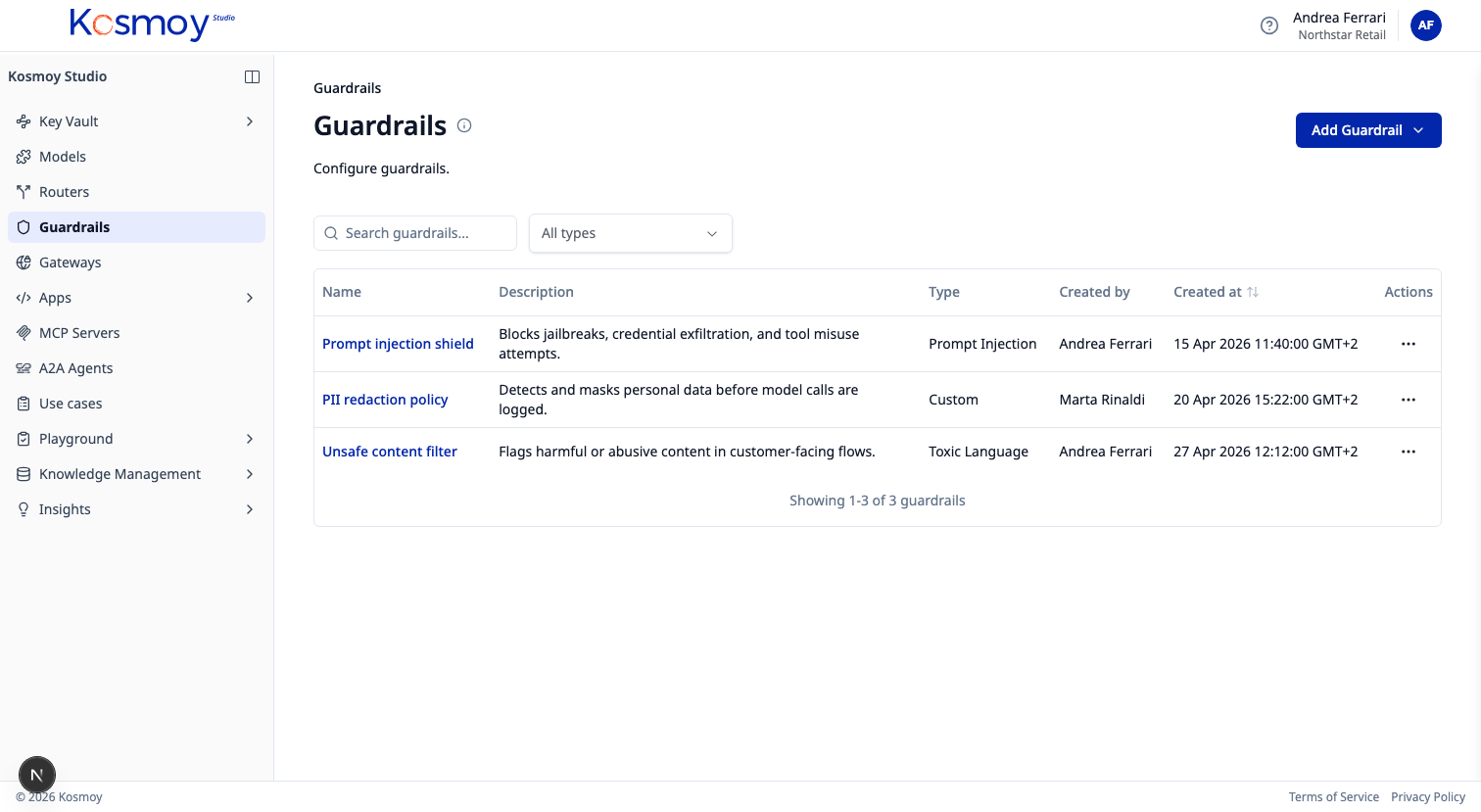

GUARDRAILS

Safety built into

every AI interaction

Purpose-built Small Language Models scan every prompt and response — blocking threats before they reach external LLMs. PII detection, toxicity filtering, and prompt injection defense, all enforced at the gateway.

Safety can't be an afterthought. Kosmoy's Guardrails module runs purpose-built Small Language Models at the gateway level, scanning every prompt and response in real time. PII is detected and redacted before it leaves your perimeter. Prompt injection attempts are blocked before they reach external models. Toxicity filters and custom rules ensure every interaction meets your organization's standards.

PII Detection

Detect and redact personally identifiable information — names, emails, phone numbers, financial data — before it reaches AI models or appears in responses.

Toxicity Filtering

Block profanity, hate speech, violence, and harmful content across configurable categories. Set thresholds per application and team.

Prompt Injection Detection

Automatically detect and block prompt injection attempts — system override claims, hypothetical bypasses, and social engineering tactics.

EU AI Act Compliance

Built-in compliance checks for EU AI Act requirements. Flag high-risk use cases and enforce regulatory boundaries automatically.

Custom Guardrail Rules

Define custom guardrail categories tailored to your business — sensitive topics, competitor mentions, confidential project names, and more.

Protect every AI interaction

See how Kosmoy Guardrails enforce safety policies at the gateway, before threats reach your models.

Or email sales@kosmoy.com.